Devoxx: Crédit Agricole combines AI, Clean Code and software quality

At the 2026 edition of Devoxx in Paris, the Crédit Agricole group presented two complementary experience reports on how software development is evolving. The first showed how AI fits into the application lifecycle, from expressing requirements to testing. The second was a reminder that this acceleration must rest on solid engineering foundations: readable code, controlled design, automation and team accountability.

During the talk “AI in the software lifecycle: what opportunities, what limits?”, Raphaël Uzan, Lead Data/AI Engineer at Crédit Agricole S.A., began by placing AI within a trajectory already well under way in the group. Its uses are no longer confined to a few specialized projects: they now include fraud detection, OCR (Optical Character Recognition), automatic invoice validation, chatbots, document summarization tools and development assistants.

This progression is directly transforming the SDLC (Software Development Lifecycle). AI can play a role in formalizing requirements, functional design, technical architecture, code generation, testing, deployment, maintenance and monitoring. In a banking group, however, this expansion remains subject to strict reliability requirements. Raphaël Uzan summed up the shift: “In ten years, AI has gone from server rooms to every employee's workstation.”

This spread therefore cannot be reduced to individual productivity gains. It requires integration into development toolchains, with standards, controls and accountability assumed by IT teams. The issue is as much about efficiency as about the ability to maintain trust in the systems produced.

Formalize requirements before automating

Ghizlane Pacheco, Senior Business Analyst/Data Product Owner at Crédit Agricole Corporate & Investment Bank, then addressed the early stages of the software lifecycle. The talk started from an operational observation: many difficulties appear before a single line of code is written. Requirements may remain ambiguous, functional or non-functional constraints may lack precision, practices may differ between teams, and documentation may lose value over time.

AI can help address these weaknesses, provided it rests on rigorous analysis. Ghizlane Pacheco presented the use of specialized agents to produce documents such as a PRD (Product Requirements Document) or a BRD (Business Requirements Document). These deliverables describe the intent, expected value, scope, affected users and acceptance criteria.

The approach relies on an iterative exchange. The agent starts from an intent, asks clarifying questions, receives human answers and then refines the document. Validation does not disappear: it becomes a checkpoint for control and arbitration. Ghizlane Pacheco insisted on this point: “Human review, present at every stage of the process, is our first guarantee of quality.”

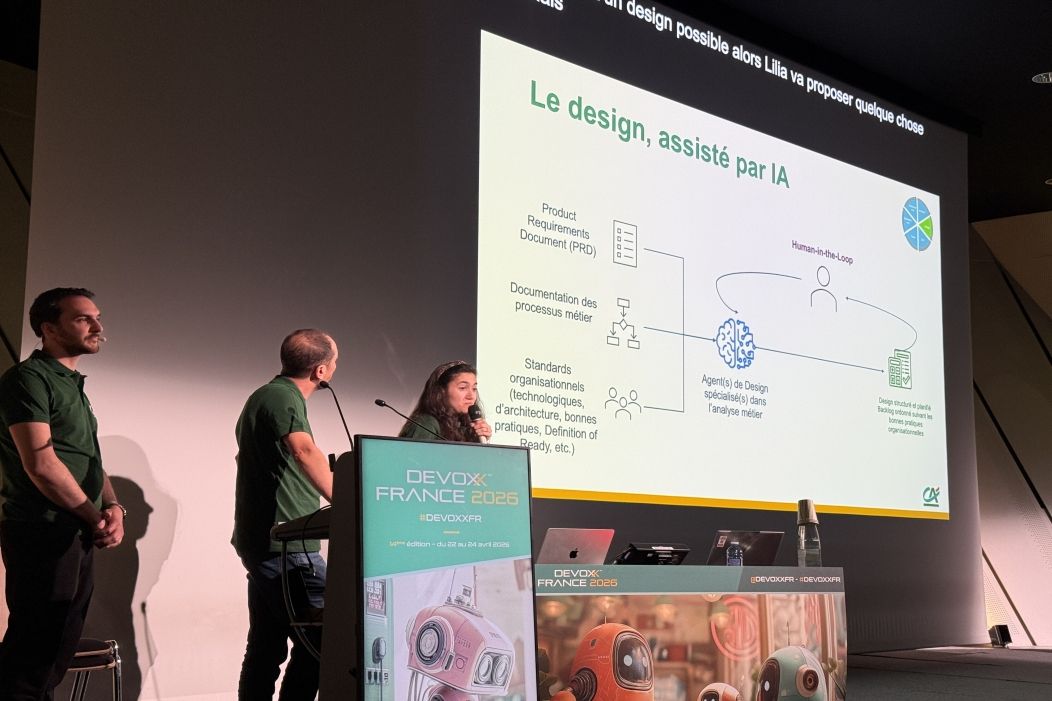

This logic extends into functional and technical design. A model can propose a coherent architecture, but it must take into account the company's technology catalog, architecture rules, backlog best practices and the “definition of ready” (the criteria indicating that an item is ready to be developed). Without this context, AI risks producing an answer that is plausible but ill-suited to the actual information system.

Agents integrated with developer tools

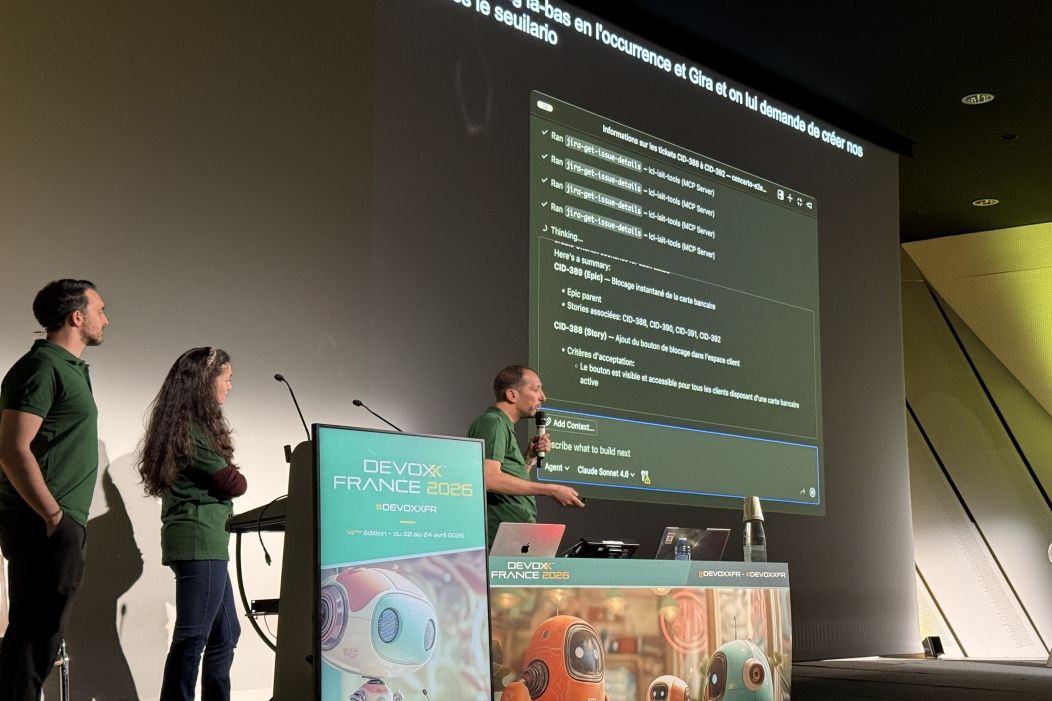

Aïssa Bouhadjar, Tech Lead at LCL, illustrated how AI is being integrated into teams' everyday tools. Starting from a banking user story, such as instantly blocking a card after it is lost, an agent can generate acceptance criteria and Gherkin scenarios. Gherkin is a language for describing an application's expected behavior, often used in BDD (Behavior-Driven Development). It expresses scenarios in a form readable by business teams, testers and developers, using a “Given”, “When”, “Then” structure.
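The Given/When/Then structure maps naturally onto an automated test. The Python sketch below shows one way the card-blocking scenario could become an executable check; the `Card` class, the `CardStatus` values and the scenario wording are hypothetical, not the agent output shown at Devoxx. In practice, a BDD tool such as Cucumber or behave would bind the scenario text in a `.feature` file to step definitions instead of keeping it in a string.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class CardStatus(Enum):
    ACTIVE = "active"
    BLOCKED = "blocked"


@dataclass
class Card:
    """Hypothetical card model, for illustration only."""

    card_id: str
    status: CardStatus = CardStatus.ACTIVE
    block_reason: Optional[str] = None

    def block(self, reason: str) -> None:
        """Block the card immediately and record the reason."""
        self.status = CardStatus.BLOCKED
        self.block_reason = reason


# The (hypothetical) Gherkin scenario mirrored by the test below
SCENARIO = """
Scenario: Customer blocks a lost card
  Given an active card
  When the customer reports the card as lost
  Then the card is blocked immediately
"""


def test_customer_blocks_lost_card() -> None:
    # Given an active card
    card = Card(card_id="card-001")
    assert card.status is CardStatus.ACTIVE
    # When the customer reports the card as lost
    card.block(reason="lost")
    # Then the card is blocked immediately
    assert card.status is CardStatus.BLOCKED
    assert card.block_reason == "lost"


test_customer_blocks_lost_card()
```

Each comment line in the test repeats a Gherkin step, which keeps the link between the business-readable scenario and the code visible to reviewers.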

The value lies in the connection with existing tools. Agents can retrieve tickets from Jira, save scenarios in Octane, draw on project context in VS Code, and interact with certain environments through the Model Context Protocol (MCP), a protocol that gives a model controlled access to tools or resources. Aïssa Bouhadjar stressed the need for human oversight: “The generated scenarios may or may not be correct, depending on the criteria of the operator or the requester. That is where the ‘human in the loop’ principle, meaning human validation, comes in to ensure their relevance.”
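MCP messages follow JSON-RPC 2.0, and a client asks a server to run one of its tools with a `tools/call` request carrying the tool name and its arguments. The sketch below only builds such a request; the tool name `get_jira_ticket` and its `ticket_key` argument are hypothetical and do not describe the integrations used at LCL. In this model, access stays controlled because the server decides which tools it exposes, and the host application can require user approval before a call is made.

```python
import json
from typing import Any, Dict


def build_tool_call(request_id: int, tool_name: str, arguments: Dict[str, Any]) -> str:
    """Serialize a JSON-RPC 2.0 'tools/call' request in the shape MCP uses."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool exposed by an MCP server wrapping the ticketing system
print(build_tool_call(1, "get_jira_ticket", {"ticket_key": "CARD-123"}))
```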

The same logic applies to development. Agents can produce or enrich a development plan, a README, technical documentation, source code, unit tests and integration tests. In a CI/CD (Continuous Integration / Continuous Delivery) pipeline, they can step in during a pull request (a request to review and merge code changes into a project) to suggest improvements. The final decision remains with the developers, especially when security, compliance or production quality is at stake.

Clean Code as a safeguard against acceleration

This ease of production nevertheless carries a risk: generating more code can increase the burden of review, comprehension and maintenance. Raphaël Uzan summed up this limit in a striking phrase: “The trap is precisely this ease of production, which can give an illusion of control.”

The “Clean Code Matters” talk, given by Marc Lecanu, Software Engineer at LCL, responds directly to this challenge. It reminds us that code is not good simply because it works: it must be readable, understandable, modifiable and maintainable by other team members. Using an example built around a method that sorts Pokémon, Marc Lecanu shows that seemingly simple code can hide incorrect behavior when naming, intent and tests do not line up.
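The exact code shown in the talk is not reproduced here. As a hypothetical illustration of the same pitfall, the first function below has a reassuring name but compares Pokédex numbers stored as strings, so "10" sorts before "9". Naming the function after its intent and making the sort key explicit fixes the problem, and a test asserting the expected order would have caught it.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Pokemon:
    name: str
    pokedex_number: str  # stored as text, e.g. read from a CSV file


def sort_pokemons(pokemons: List[Pokemon]) -> List[Pokemon]:
    # Looks correct, but compares strings: "10" < "9" lexicographically
    return sorted(pokemons, key=lambda p: p.pokedex_number)


def sort_by_pokedex_number(pokemons: List[Pokemon]) -> List[Pokemon]:
    # The name states the intent, and the key compares numbers
    return sorted(pokemons, key=lambda p: int(p.pokedex_number))


team = [Pokemon("Caterpie", "10"), Pokemon("Blastoise", "9"), Pokemon("Bulbasaur", "1")]
print([p.name for p in sort_pokemons(team)])           # ['Bulbasaur', 'Caterpie', 'Blastoise']
print([p.name for p in sort_by_pokedex_number(team)])  # ['Bulbasaur', 'Blastoise', 'Caterpie']
```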

Clean Code is therefore not an aesthetic preference but a collective discipline. Marc Lecanu gives an operational definition: “Clean Code refers to code that can be read and maintained by any member of the team.” This approach starts with naming. A name must reveal intent and provide context; overly generic terms weaken understanding. Hard-coded values should be replaced with explicit constants or enumerations.
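A hypothetical banking example of these naming rules: the first function forces the reader to guess what `s`, `a`, `3` and `500` mean, while the second states its intent and replaces the magic values with an enumeration and a named constant. The status codes and the 500-euro limit are invented for illustration.

```python
from enum import Enum


# Before: cryptic names and unexplained magic values
def check(s, a):
    return s == 3 and a <= 500


# After: an enumeration and a named constant make the rule readable
class AccountStatus(Enum):
    CLOSED = 1
    SUSPENDED = 2
    ACTIVE = 3


DAILY_WITHDRAWAL_LIMIT_EUR = 500


def can_withdraw(status: AccountStatus, amount_eur: int) -> bool:
    """Allow a withdrawal only on an active account, within the daily limit."""
    return status is AccountStatus.ACTIVE and amount_eur <= DAILY_WITHDRAWAL_LIMIT_EUR


print(can_withdraw(AccountStatus.ACTIVE, 200))     # True
print(can_withdraw(AccountStatus.SUSPENDED, 200))  # False
```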

Functions call for the same vigilance. A function that is too long often signals a scope that is too broad. Too many arguments make a function harder to use. Boolean parameters can indicate that several behaviors coexist in the same method. Marc Lecanu also recalls two simple principles: KISS (Keep It Simple, Stupid) and DRY (Don't Repeat Yourself). In a context where AI can quickly produce variants of the same code, these safeguards limit the scattering of application logic.
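To make the point about boolean parameters concrete, here is a hypothetical fee calculation. The flag in `compute_fee` hides two behaviors in one function; splitting it into two explicitly named functions removes the flag, and a shared helper keeps the percentage-and-rounding rule in a single place (DRY). The rates and the fixed fee are invented for illustration.

```python
from decimal import Decimal

DOMESTIC_RATE = Decimal("0.01")
INTERNATIONAL_RATE = Decimal("0.02")
INTERNATIONAL_FIXED_FEE = Decimal("1.50")
CENT = Decimal("0.01")


# Before: a boolean flag means two behaviors share one function,
# and the rounding rule is written twice.
def compute_fee(amount: Decimal, is_international: bool) -> Decimal:
    if is_international:
        return (amount * INTERNATIONAL_RATE).quantize(CENT) + INTERNATIONAL_FIXED_FEE
    return (amount * DOMESTIC_RATE).quantize(CENT)


# After: one function per behavior, with the shared rule factored out (DRY).
def _percentage_fee(amount: Decimal, rate: Decimal) -> Decimal:
    """Apply a percentage rate and round to the cent."""
    return (amount * rate).quantize(CENT)


def domestic_transfer_fee(amount: Decimal) -> Decimal:
    return _percentage_fee(amount, DOMESTIC_RATE)


def international_transfer_fee(amount: Decimal) -> Decimal:
    return _percentage_fee(amount, INTERNATIONAL_RATE) + INTERNATIONAL_FIXED_FEE


print(domestic_transfer_fee(Decimal("100")))       # 1.00
print(international_transfer_fee(Decimal("100")))  # 3.50
```

Call sites now read `international_transfer_fee(amount)` instead of `compute_fee(amount, True)`, so the behavior is visible without opening the function.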

From design principles to production responsibility

Marc Lecanu also presents the SOLID principles, which aim to make code easier to maintain and evolve. These principles encourage limiting each class's responsibilities, adding features without modifying existing code, avoiding overly rigid dependencies and using interfaces tailored to the software's actual needs.
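A minimal Python sketch of several of these principles, using hypothetical class names: `CardBlockingService` depends on a small `Notifier` interface (here a `typing.Protocol`) rather than on concrete channels, so adding, for example, a push-notification channel means writing a new class without modifying the service. It illustrates dependency inversion, open/closed and interface segregation; it is not code presented at the talk.

```python
from typing import List, Protocol, Set


class Notifier(Protocol):
    """Small interface exposing only what the service needs."""

    def send(self, recipient: str, message: str) -> None: ...


class SmsNotifier:
    def __init__(self) -> None:
        self.sent: List[str] = []

    def send(self, recipient: str, message: str) -> None:
        # A real implementation would call an SMS gateway; here we only record it
        self.sent.append(f"SMS to {recipient}: {message}")


class EmailNotifier:
    def __init__(self) -> None:
        self.sent: List[str] = []

    def send(self, recipient: str, message: str) -> None:
        self.sent.append(f"Email to {recipient}: {message}")


class CardBlockingService:
    """Blocks cards and delegates delivery of notifications to injected notifiers."""

    def __init__(self, notifiers: List[Notifier]) -> None:
        self._notifiers = notifiers
        self.blocked_cards: Set[str] = set()

    def block_card(self, customer: str, card_id: str) -> None:
        self.blocked_cards.add(card_id)
        for notifier in self._notifiers:
            notifier.send(customer, f"Card {card_id} has been blocked")


sms, email = SmsNotifier(), EmailNotifier()
service = CardBlockingService([sms, email])
service.block_card("alice", "card-42")
print(sms.sent)  # ['SMS to alice: Card card-42 has been blocked']
```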

These principles echo the messages of the AI talk. An agent can speed up certain tasks, but quality still depends on design rules, tests and reviews. Marc Lecanu emphasizes unit testing, integration testing, end-to-end testing, load testing, TDD (Test-Driven Development), coverage checks and dependency scans to detect vulnerabilities.
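A short, hypothetical illustration of the TDD cycle in Python: the tests are written first and fail, then `is_valid_iban` is implemented until they pass, after which the code can be refactored under the protection of the tests. The IBAN mod-97 check is used only as a familiar banking example; a production validator would also check country-specific lengths and formats. The sample IBAN is a widely used documentation example.

```python
import unittest


# Step 1 (red): these tests are written before the implementation exists.
class IbanValidationTest(unittest.TestCase):
    def test_accepts_valid_iban(self) -> None:
        self.assertTrue(is_valid_iban("GB82 WEST 1234 5698 7654 32"))

    def test_rejects_wrong_check_digits(self) -> None:
        self.assertFalse(is_valid_iban("GB83 WEST 1234 5698 7654 32"))

    def test_rejects_invalid_characters(self) -> None:
        self.assertFalse(is_valid_iban("GB82-WEST-1234"))


# Step 2 (green): an implementation that makes the tests pass.
def is_valid_iban(iban: str) -> bool:
    """Mod-97 check: move the first four characters to the end,
    map letters to numbers (A=10 ... Z=35) and expect a remainder of 1."""
    compact = iban.replace(" ", "").upper()
    if len(compact) < 5 or not (compact.isascii() and compact.isalnum()):
        return False
    rearranged = compact[4:] + compact[:4]
    digits = "".join(str(int(char, 36)) for char in rearranged)
    return int(digits) % 97 == 1


if __name__ == "__main__":
    # exit=False keeps the interpreter running after the test report
    unittest.main(argv=["iban-tests"], exit=False)
```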

The two talks thus converge on the same conclusion: AI increases teams' execution capacity, but it also raises the bar for engineering. Crédit Agricole presents an approach in which agents, specifications, Clean Code, tests, CI/CD and governance are not separate topics. Together they form the conditions for a faster software lifecycle that does not sacrifice the readability, maintainability and quality expected in a banking environment.